What Data Reveals About AI and Student Writing

How does AI affect student writing? New findings show AI stabilises performance gaps without necessarily strengthening core argumentation skills.

In a new research initiative titled ‘Assessing AI Tools for Argumentation in Academic Writing in the Humanities and Social Sciences’, CWC, in collaboration with the Mphasis AI & Applied Technology Lab, stepped into one of higher education’s most urgent questions: How is AI reshaping the way students think, argue, and write?

At the heart of the project was a simple yet powerful experiment. Students produced 500-word argumentative essays in response to curated prompts from English, Sociology, Political Science, History, and International Relations. In the first round, they wrote independently; in the second, they had AI assistance. Through rigorous quantitative and qualitative comparison, the research team evaluated key components of academic argumentation: clarity of introduction and thesis, strength of claims, use of evidence, structural coherence, and academic style.

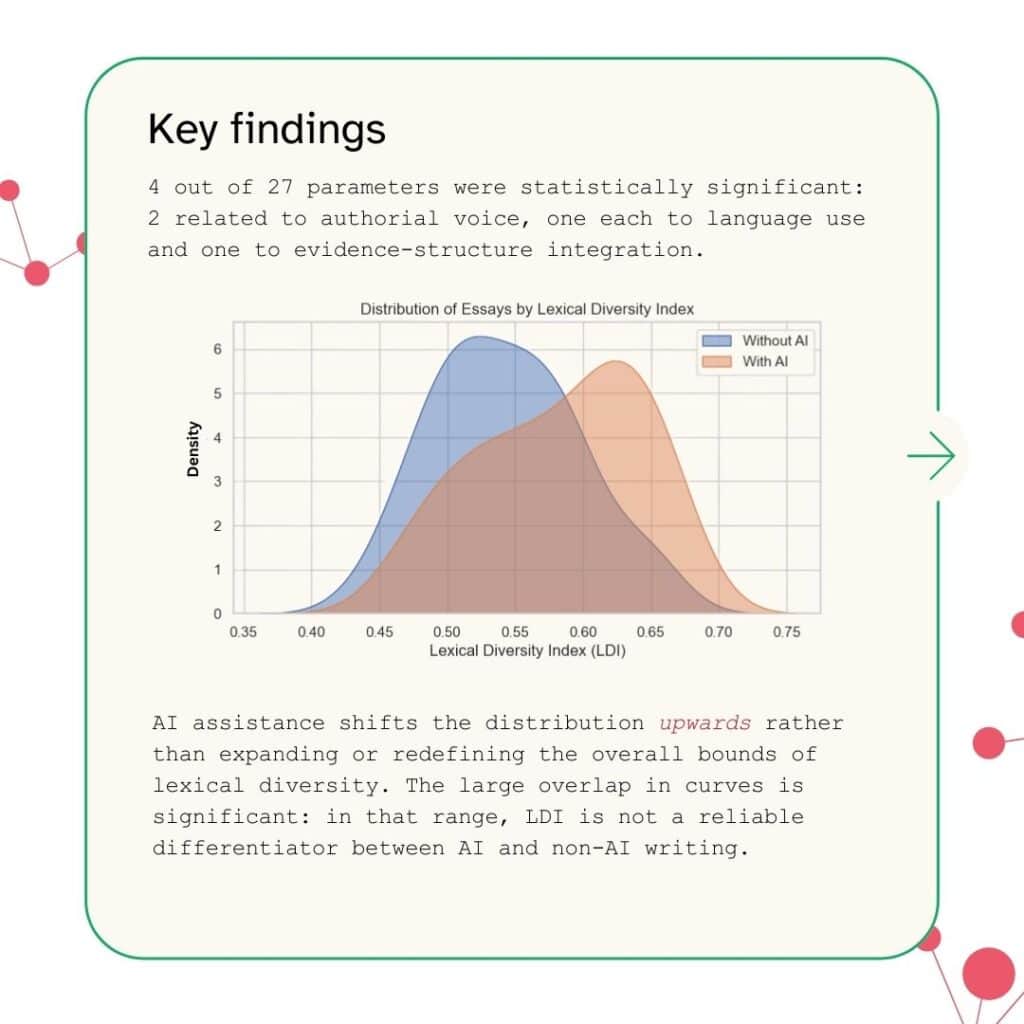

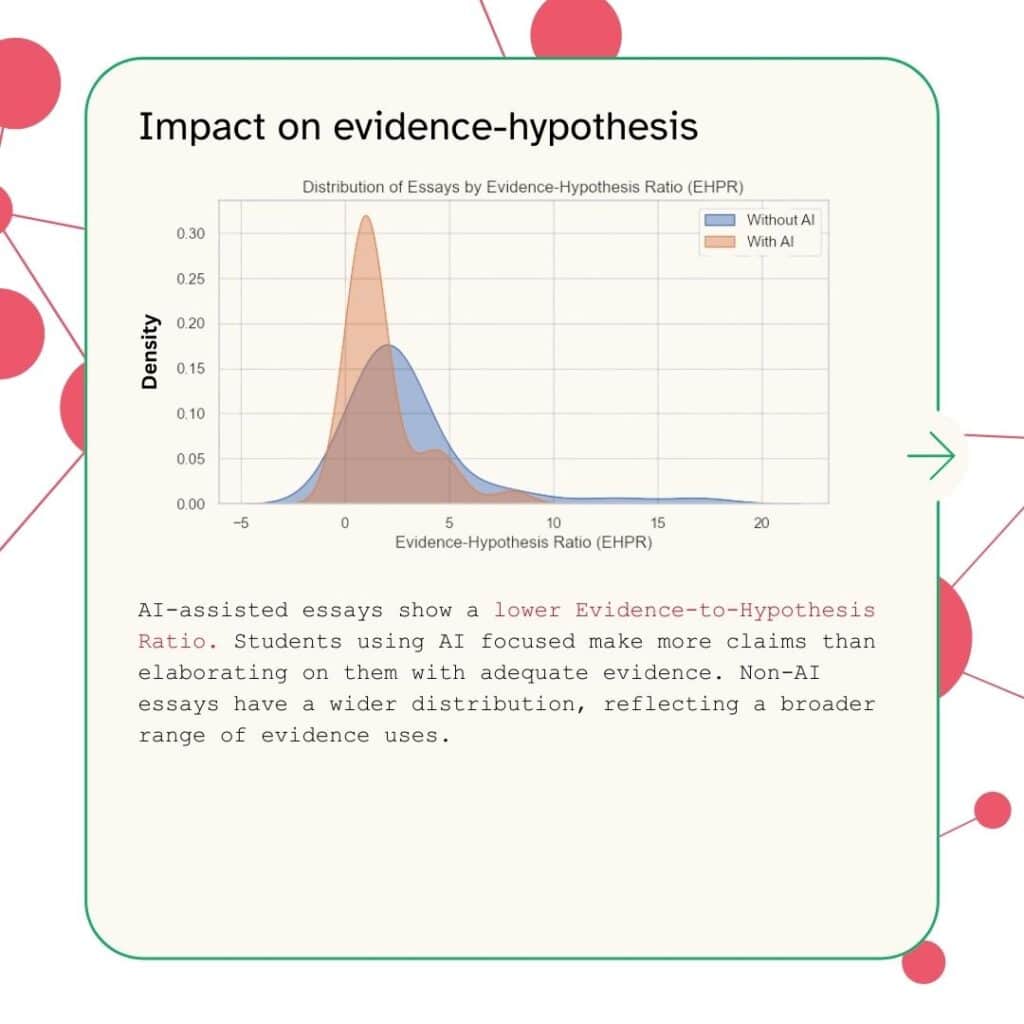

The results challenge simplistic narratives. AI did not uniformly ‘improve’ writing by shifting all students toward higher performance. Instead, it reshaped the distribution of outcomes. Median scores increased across both ELT and non-ELT groups, with gains in style and organisation among ELT students. Yet the overall score distributions continued to overlap, and non-ELT students retained a wider spread in writing performance. The highest levels of performance were still more frequently achieved by those with stronger baseline proficiency. AI functioned less as a universal elevator and more as a stabilising mechanism, narrowing visible gaps without necessarily fostering good argumentation.

Component-level analysis revealed further nuance. While AI reduced disparities across most criteria, the Introduction component defied the broader trend: the relative advantage between groups shifted when AI was introduced. These mixed patterns underscore a central insight: outcomes are shaped not merely by access to AI, but by how students engage with it.

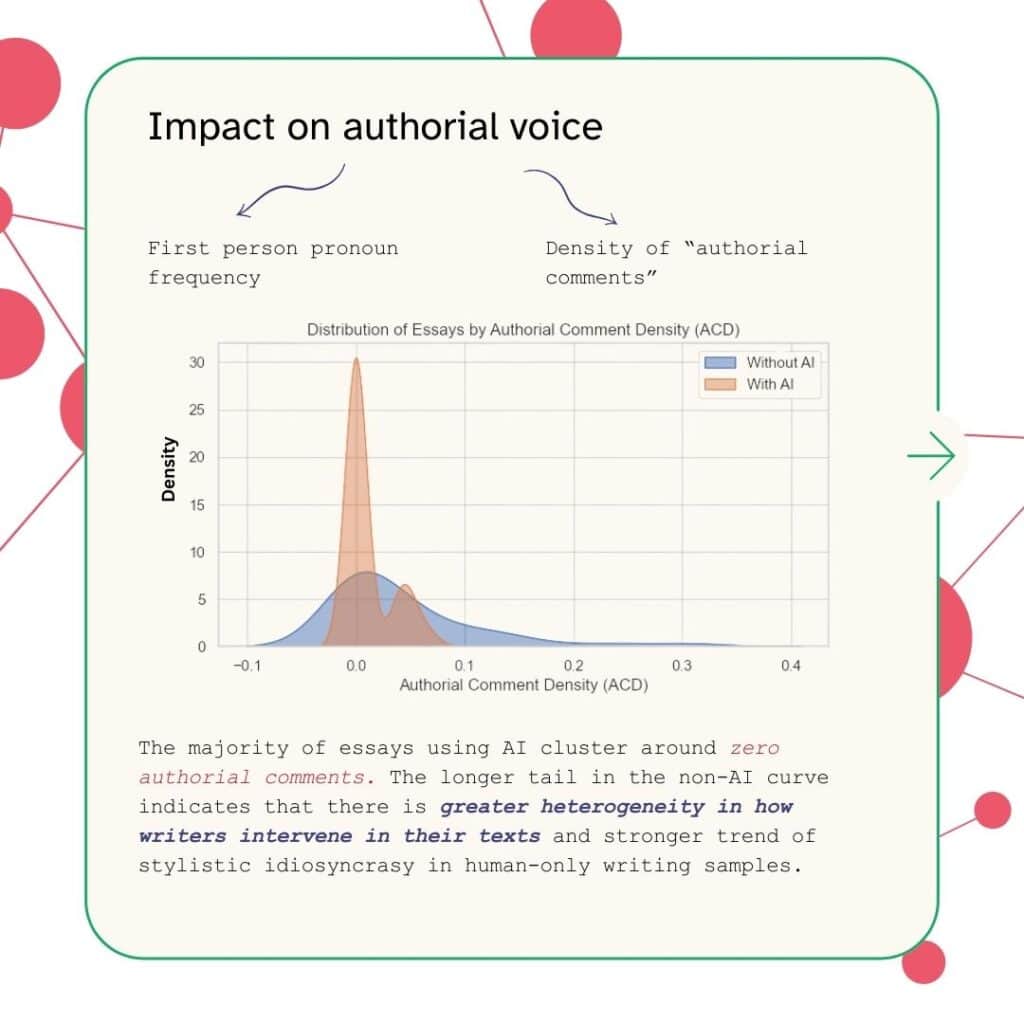

Chat log analysis makes this clear. ELT students tended to use shorter prompts, gave fewer instructions, and did not critically interrogate AI outputs; none used the tool collaboratively across research, drafting, and revision stages. Some non-ELT students, by contrast, engaged more interactively. In nearly half of the logged cases, the AI generated the full essay body with minimal human editing – revealing a concerning tendency toward outsourcing cognitively demanding work rather than refining it. Notably, no students used AI solely to edit independently researched drafts; they either paraphrased AI-generated ideas or relied on fully generated text.

These behavioural patterns carry significant implications. AI can compensate for surface-level weaknesses in grammar and structure, particularly for ELT students, but it may also sidestep the deeper learning processes central to argument development. The study, therefore, maps not only performance shifts but also modes of use.

AI’s educational value depends entirely on the depth of human engagement. The competitive advantage lies not in access to AI tools, but in cultivating the intellectual discipline to use them critically: ensuring that technology supports, rather than supplants, scholarly thinking.

– Written by Neerav Dwivedi, Senior Writing Fellow; Archishman Sarker, Senior Writing Tutor; Sampurna Dutta, Senior Writing Tutor, Ashoka University